Installation view, Martine Syms, Grand Calme, Sadie Coles HQ, London, 06 September – 20 October 2018, Copyright Martine Syms, courtesy Sadie Coles HQ, London, Photography: Robert Glowacki

Technology is not neutral. That is the theme that reverberates throughout this powerful new exhibit at the de Young Museum. With twelve rooms featuring a range of different mediums, Uncanny Valley: Being Human in the Age of AI brings a diverse perspective to critical questions about how technology, specifically artificial intelligence and machine learning, both reflects and impacts our society and what role art can play in shaping discourse regarding futuristic concerns.

Technology is not neutral. Computers are not neutral. Data mining is not neutral. Facial recognition is not neutral. All of these things that increasingly pervade our culture, and which will shape society moving forward, are designed by human beings. Humans are biased. Humans working in the tech field, earning tech salaries, are privileged in ways that lend themselves to specific biases, ones that can and do have a huge impact on the rest of society. We know this. And yet, as computers take over more and more of our lives we continue to allow these biases to persist within the technology.

Zach Blas, The Doors, 2019 installation view, Edith-Russ-Haus für Medienkunst, Oldenburg, Germany, Courtesy of the Artist & Edith-Russ-Haus für Medienkunst

Uncanny Valley begins with a powerful room (Zach Blas, The Doors) that serves as a portal to the rest of the exhibit as well as a terrific foundation for the conversations to take place as you move through the displays. In the center of the room is a glass cabinet showcasing a series of nootropics with names like Prodigy, Unfair Advantage, and Infinite. Though familiar with the use of so-called Smart Drugs, I had to research to find out if these were all real products - showcased all together they seem beyond ridiculous - and yet they all appear to be actual supplements sold on the market today. The case is surrounded by faux plants, designed to mimic the gardens of many of Silicon Valley’s campuses. This in turn is surrounded by visual imagery and soundscapes inspired by the 1960s counterculture. How did the Bay Area go from psychedelic sixties anti-corporate, anti-government use of LSD for creative and spiritual expansion to the 21st century tech-heavy use of microdosing and brainhacking with supplements? And perhaps more relevant to this exhibit, when we look at the similarities and differences between fifty years ago and today, what can we imagine as we project into the future another fifty years?

There are twelve rooms in this exhibit, some with multiple art pieces. They include audio, video, digital interactive art, paintings, found objects, sculpture, and more. What might be most critical in this exhibition is the series of questions the work asks of the viewer.

In What Ways is AI Already Dangerous?

Lynn Hershman Leeson, Video still from Shadowstalker, 2019, Dual channel video installation, 12 min, Lynn Hershman Leeson

One key question resonating throughout the exhibit is, “what are some of the dangers of artificial intelligence that are not only problematic for the future but also of concern today?” For example, Lynn Hershman Leeson’s Shadowstalker asks you to enter your email address into a computer, whereupon it instantly pulls up as much information about you as it can find and displays it for the whole room to see. Where you live, what your social media handles are, who else you know in the system … it’s all at the computer’s “fingertips.” Of course, there are laws in place about the use and display of such information. The museum makes a point of letting you know they don’t store this information. And the key information about you, such as an exact address, is redacted on the screen. Nevertheless, it instantly reflects back to you how carelessly you might give out your personal information without any regard for who will use it and how.

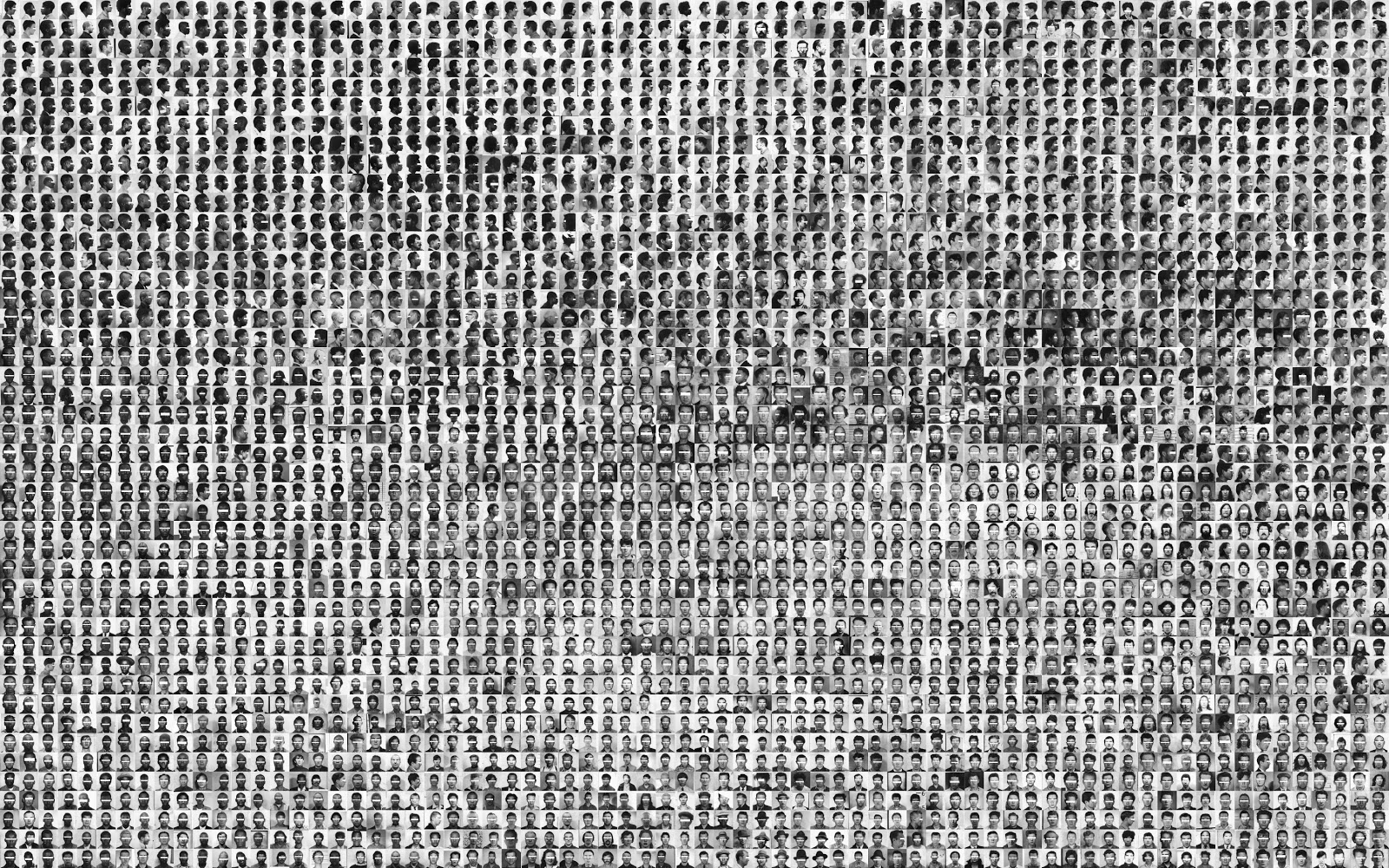

Trevor Paglen, They Took the Faces from the Accused and the Dead... (SD18), 2019 Silver gelatine print, pins, 3240 individual images: 225 × 360 in. (571.50 × 914.40 cm), © Trevor Paglen, Courtesy of the Artist and Altman Siegel, San Francisco

Similarly, the Stolen Faces display questions the use of facial recognition in predicting crime and determining who might be a criminal. The striking wall display consists of photo upon photo of black-and-white mug shots, which, the audio tour reminds you, were taken of accused and convicted criminals without their express consent as part of the booking process this society has accepted. Artificial intelligence systems then study this data set to get a sense of which human faces might be a threat. Think about that carefully: you could be walking down the street one day when a camera sees your face, determines that you match the profile of a likely criminal, and alerts the authorities even though you’ve never committed a crime. We all know that certain people are more likely than others to be arrested in our society, so the existing mug shots in the system reinforce that bias. AI multiplies and magnifies that bias with predictive technology. The audio tour reminds you further: “Data is not neutral.” They refer to this as “Dirty Data.”

What Does It Mean To Be Human?

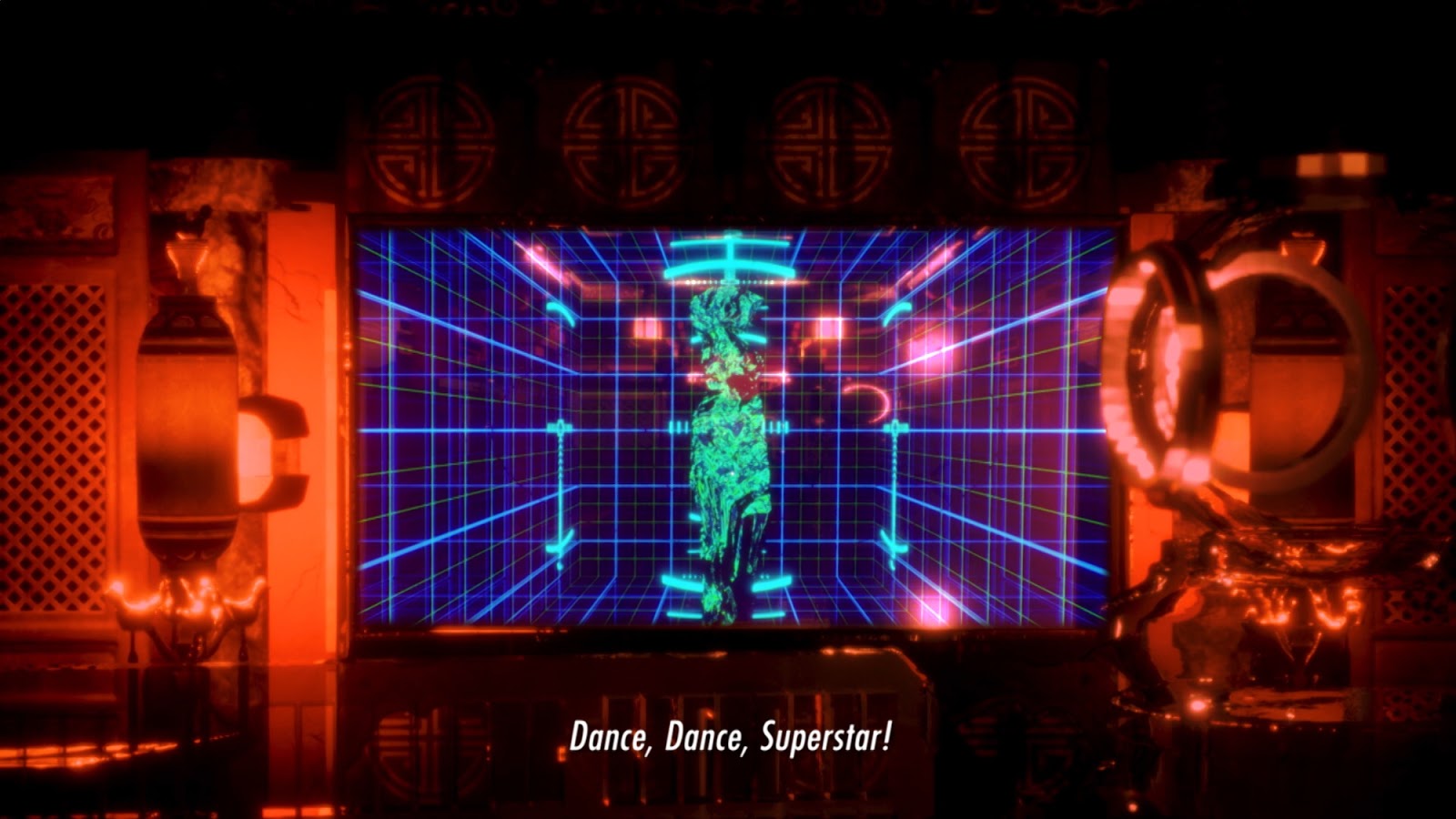

Lawrence Lek, AIDOL [HD video still], 2019, HD video, stereo sound, duration: 85 mins, © Lawrence Lek, courtesy Sadie Coles HQ, London

If we create machines that can look and act like human beings, then what does it mean to be human? There are several different takes on this question throughout the exhibit. In Lawrence Lek’s AIDOL, we see a film about an AI creature named Geo who wants to become an artist. We also see a human singer named Diva who suffers from artist’s block and can’t seem to write her next song. Ultimately, Geo mines her past songs for all of the best parts and uses computer technology to come up with a new song that is a huge hit. We like to believe in the idea that art is unique to humanity, that the drive and ability to create is a human trait that we will retain and machines will never obtain. This video questions that assumption. (Of course, there are already computers-as-artists.)

The final display in the exhibit, Ian Cheng’s Bag of Beliefs, allows for interaction with a simulated creature. Notably, there are many interactive components throughout the exhibit; visitors can use QR codes and texting to engage digitally with the displays. In this one, you can offer gifts to the animal and he can opt to accept them or not. This serpent-like creature weaves around on a screen and there is something about his design that inevitably makes you think, “he is so cute.” This speaks to the title of the exhibit and how tech creators today utilize what we know about human psychology to create virtual and robotic creatures that speak to our humanity.

Christopher Kulendran Thomas in collaboration with Annika Kuhlmann, Being Human, 2019, Installation view: Ground Zero at Schinkel Pavillon. Image: Andrea Rossetti

In contrast, the exhibit Being Human utilizes video that combines “real humans” with “deep fake” avatars in a way that reflects how unnerving it is to us when avatars look strikingly similar to - but aren’t quite exactly the same as - human beings. That video takes us on a journey through Sri Lanka, speaking to the civil war atrocities there, and juxtaposes that with “deep fake” celebrities. This juxtaposition raises the question again, “what is human?” In particular, looking at the terrible things allowed to happen in Sri Lanka in the 21st century - who is the “human” we mean when discussing “human rights” and who gets to decide that?

How Has Technology Changed, and How Will It Change, Our Social Relationships?

Ian Cheng, Installation view: BOB, Central Pavilion, Giardini, Venice Biennale, Venice, 2019, Copyright Ian Cheng, Courtesy the artist and Gladstone Gallery, New York and Brussels, Photography by: Andrea Rossetti

The underlying premise of this exhibit is that technology has fundamentally changed our relationship with each other and the world, changing our society in ways that we may not yet realize. Social media and technology both connect and separate us in new ways that didn’t exist before these innovations.

We know that technology is changing our brains. However, we do not know the ways in which our brains are changing. Perhaps growing up with this technology will reduce or eliminate our long-term memory, which sounds terrible, but if technology becomes a repository for our collective memory then it could free the brain up to evolve in new ways that we can’t yet conceive of.

And that brings us full circle back to the first room. We can change our brains with psychedelic drugs and nootropics. We can change our brains with technology and interactive art. The exhibit asks that instead of allowing this to happen to us, we make conscious decisions about how we want this to happen. It asks that we include the voices of the under-privileged, of artists, and of a variety of people working in fields other than technology to bring up the difficult questions so that we can shape the future in a way that doesn’t just magnify the problems of the past.

Uncanny Valley: Being Human in the Age of AI is one exhibit in the museum’s larger ongoing initiative to “engage with urgent ideas and issues that define life in the present and in the future.” The exhibit runs from February 22, 2020 to October 25, 2020. It is included in the price of general admission, which is $15 for adults, $12 for seniors, $6 for students, and free to Members as well as youth age 17 and under. General admission is free to everyone on the first Tuesday of each month and free every Saturday to residents of any Bay Area county. The museum is open Tuesday - Sunday, 9:30 am–5:15 pm.

Comments (2)

I did the interactive exhibit where you discover information available on you via email and it was great! I had nothing come up!!

I saw this exhibit before COVID lockdown started and I can't believe how quickly we relied on technology when it can be so scary.